Uncertainty as a Swiss army knife: new adversarial attack and defense ideas based on epistemic uncertainty

Adversarial Machine Learning attacks and their mitigation methods

Publication: Springer - Complex & Intelligent Systems

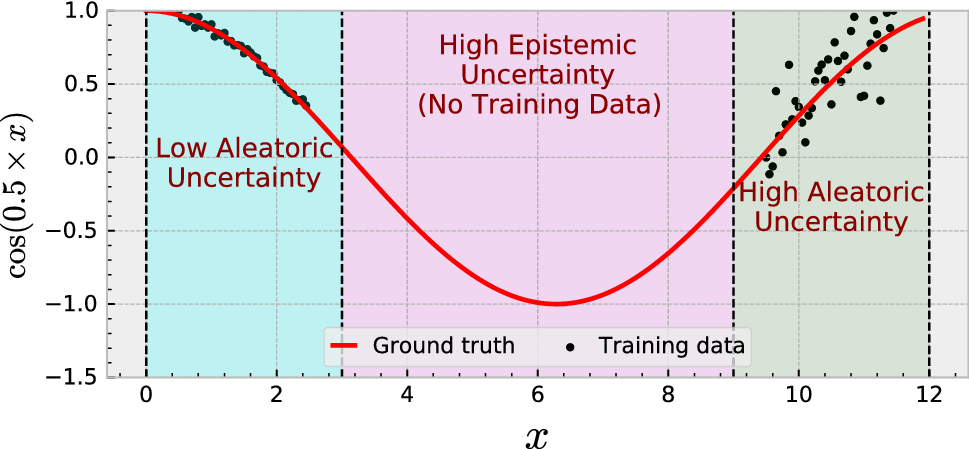

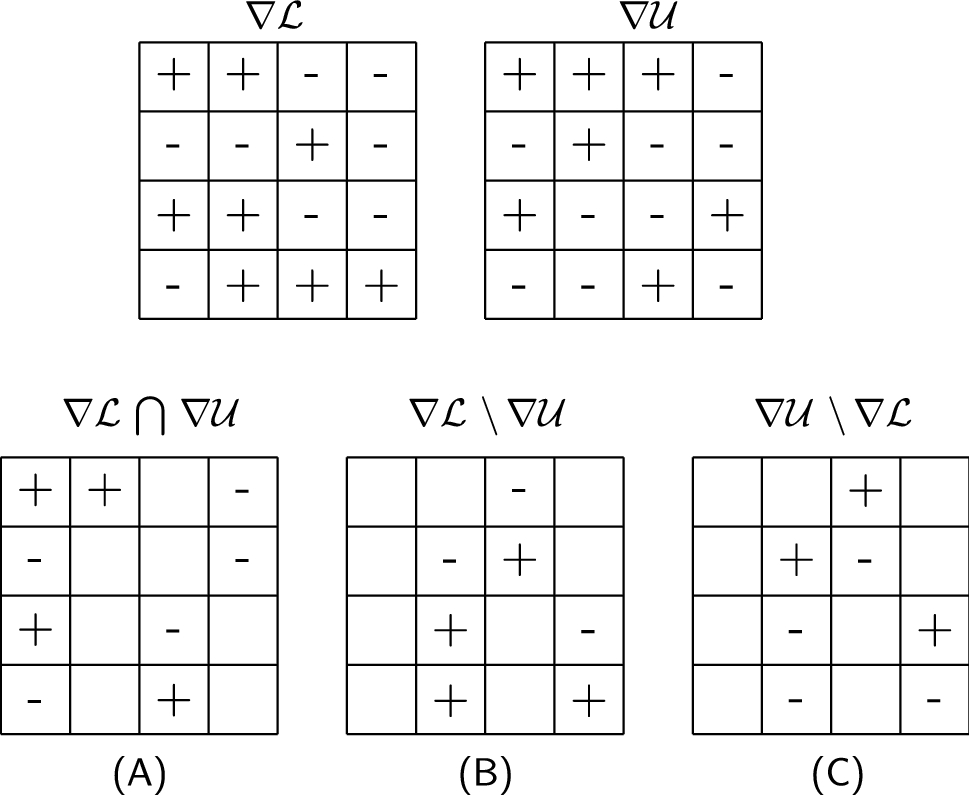

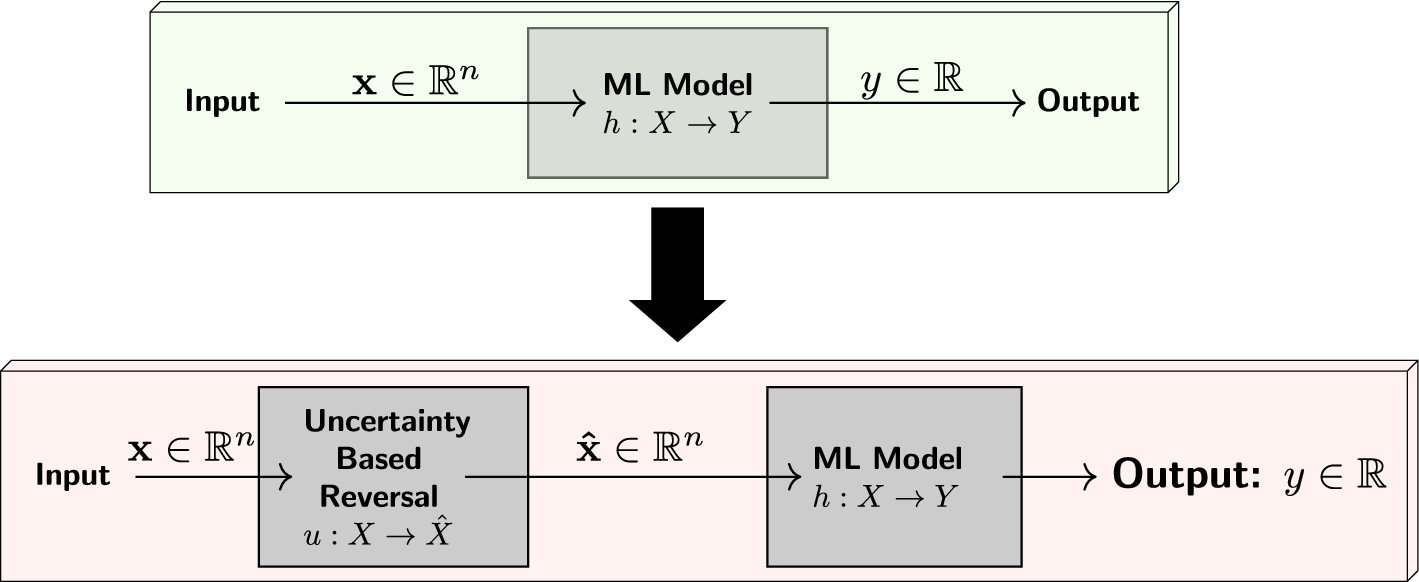

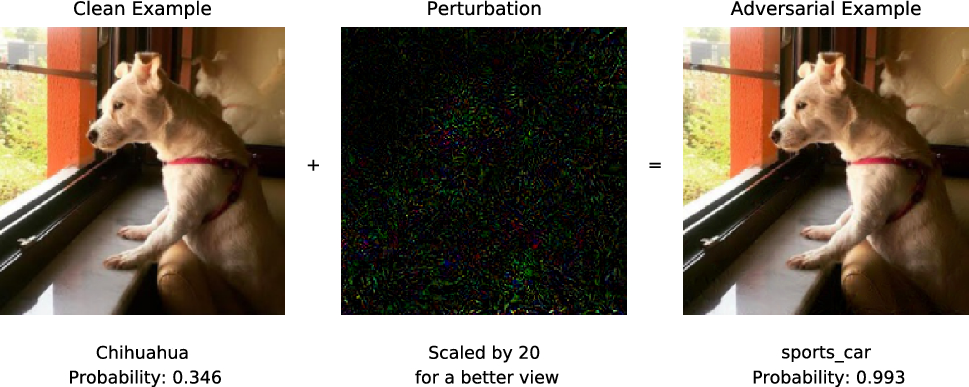

Abstract. Although state-of-the-art deep neural network models are known to be robust to random perturbations, these architectures have been shown to be quite vulnerable to deliberately crafted perturbations, even quasi-imperceptible ones. These vulnerabilities make it challenging to deploy deep neural network models in areas where security is a critical concern. In recent years, many studies have sought to develop new attack methods and new defense techniques that enable more robust and reliable models. In this study, we use the quantified epistemic uncertainty obtained from the model’s final probability outputs, along with the model’s own loss function, to generate more effective adversarial samples. We also propose a novel defense approach against attacks such as DeepFool, which produce adversarial samples located near the model’s decision boundary. We have verified the effectiveness of our attack method on the MNIST (Digit), MNIST (Fashion) and CIFAR-10 datasets. In our experiments, our proposed uncertainty-based reversal method achieved a worst-case success rate of around 95% without compromising clean accuracy.
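To make "epistemic uncertainty obtained from the model’s final probability outputs" concrete, here is a minimal sketch of one common estimator: the mutual information between predictions and model parameters, computed from several stochastic forward passes (for example, with dropout left active at inference time). The abstract does not say which estimator the paper uses, so treat this as an illustrative assumption. The function name `epistemic_uncertainty` and the toy probability arrays are hypothetical.

```python
import numpy as np

def epistemic_uncertainty(probs, eps=1e-12):
    """Mutual-information estimate of epistemic uncertainty.

    probs: array-like of shape (T, C) holding the softmax outputs of
    T stochastic forward passes over C classes (assumed input format).
    """
    probs = np.asarray(probs, dtype=float)
    mean_probs = probs.mean(axis=0)
    # Entropy of the averaged prediction (total predictive uncertainty).
    total = -np.sum(mean_probs * np.log(mean_probs + eps))
    # Average entropy of the individual predictions (aleatoric part).
    aleatoric = -np.mean(np.sum(probs * np.log(probs + eps), axis=1))
    # The remainder reflects disagreement between passes (epistemic part).
    return total - aleatoric

# Toy inputs: three passes that agree, and three passes that disagree.
agree = [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]]
disagree = [[0.99, 0.01], [0.01, 0.99], [0.5, 0.5]]

print(epistemic_uncertainty(agree))     # close to zero
print(epistemic_uncertainty(disagree))  # clearly positive
```

When the passes agree, the estimate is near zero even though each prediction is not fully confident. When they disagree, it grows. An uncertainty-guided attack or defense could use such a score as a signal alongside the loss, though the exact formulation is given in the paper rather than here.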

BibTeX Information

@article{tuna2022uncertainty,
  title={Uncertainty as a Swiss army knife: new adversarial attack and defense ideas based on epistemic uncertainty},
  author={Tuna, Omer Faruk and Catak, Ferhat Ozgur and Eskil, M Taner},
  journal={Complex \& Intelligent Systems},
  pages={1--19},
  year={2022},
  publisher={Springer}
}